Visualize sweep results

Visualize the results of your W&B Sweeps with the W&B App UI. Navigate to the W&B App UI at https://wandb.ai/home. Choose the project that you specified when you initialized the W&B Sweep. This opens your project workspace. Select the Sweep icon (a broom) in the left panel. From the Sweep UI, select the name of your Sweep from the list.

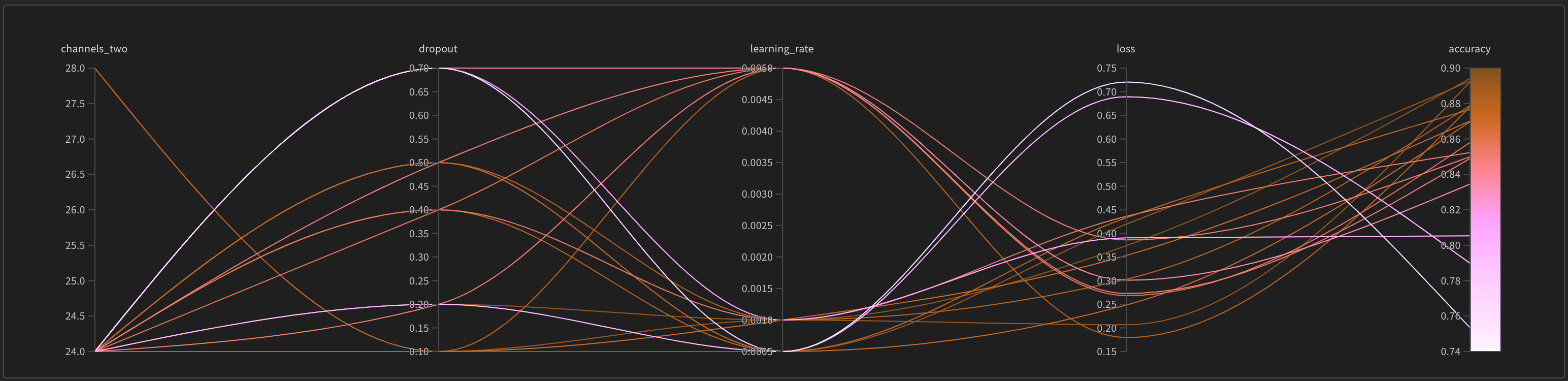

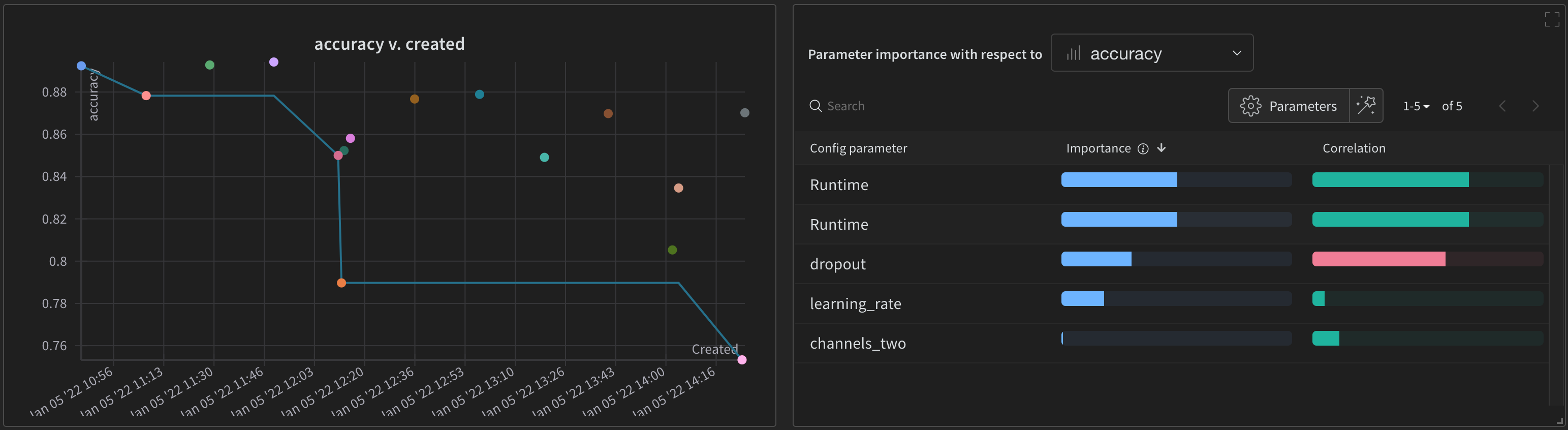

By default, W&B creates a parallel coordinates plot, a parameter importance plot, and a scatter plot when you start a W&B Sweep job.
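For context, the sketch below shows one way a Sweep that produces these default plots might be started from Python. The project name `my-sweep-project`, the metric name `val_loss`, the hyperparameter names, and the `fake_val_loss` helper are all hypothetical placeholders, and the loss value is a made-up stand-in for real training.

```python
# Hypothetical sweep definition: metric and parameter names are illustrative only.
sweep_config = {
    "method": "random",
    "metric": {"name": "val_loss", "goal": "minimize"},
    "parameters": {
        "learning_rate": {"min": 0.0001, "max": 0.1},
        "batch_size": {"values": [16, 32, 64]},
    },
}


def fake_val_loss(learning_rate, batch_size):
    """Stand-in for real training; returns a made-up loss value."""
    return abs(learning_rate - 0.01) * 10 + 1.0 / batch_size


def train():
    """Run once per hyperparameter combination chosen by the Sweep."""
    import wandb  # imported lazily so the config can be inspected without wandb

    with wandb.init() as run:
        cfg = run.config
        # Log the metric named in sweep_config so the default plots can use it.
        run.log({"val_loss": fake_val_loss(cfg.learning_rate, cfg.batch_size)})


def main():
    """Register the Sweep and run ten trials with a local agent."""
    import wandb

    sweep_id = wandb.sweep(sweep=sweep_config, project="my-sweep-project")
    wandb.agent(sweep_id, function=train, project="my-sweep-project", count=10)
```

Calling `main()` after logging in with `wandb login` should register the Sweep and run the trials. The Sweep then appears under the project's Sweep icon, where the plots described here are available.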

Parallel coordinates plots summarize the relationship between large numbers of hyperparameters and model metrics at a glance. For more information on parallel coordinates plots, see Parallel coordinates.

The scatter plot (left) compares the W&B Runs that were generated during the Sweep. For more information about scatter plots, see Scatter Plots.

The parameter importance plot (right) lists the hyperparameters that were the best predictors of, and most highly correlated with, desirable values of your metrics. For more information on parameter importance plots, see Parameter Importance.
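To make "highly correlated" concrete, the sketch below computes a plain Pearson correlation between each hyperparameter and a metric across a handful of runs. It is an illustration only, not the calculation W&B performs, and the panel's own scores may be derived differently. The run values, the hyperparameter names, and the `val_loss` metric are hypothetical.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Hypothetical run summaries, invented for illustration.
runs = [
    {"learning_rate": 0.001, "dropout": 0.5, "val_loss": 0.90},
    {"learning_rate": 0.010, "dropout": 0.1, "val_loss": 0.60},
    {"learning_rate": 0.050, "dropout": 0.3, "val_loss": 0.45},
    {"learning_rate": 0.100, "dropout": 0.2, "val_loss": 0.40},
]

metric = [run["val_loss"] for run in runs]
correlations = {
    name: pearson([run[name] for run in runs], metric)
    for name in ("learning_rate", "dropout")
}

# Rank hyperparameters by the strength of their linear relationship to the metric.
for name, value in sorted(correlations.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name}: {value:+.3f}")
```

A value near +1 or -1 indicates a strong linear relationship with the metric, and a value near 0 indicates little linear relationship. Correlation alone does not capture interactions between hyperparameters.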

You can change the independent and dependent values (x and y axes) that are used by default. Each panel has a pencil icon called Edit panel. Choose Edit panel and a modal appears. Within the modal, you can alter the behavior of the graph.

For more information on all default W&B visualization options, see Panels. See the Data Visualization docs for information on how to create plots from W&B Runs that are not part of a W&B Sweep.